Speaking of alien technologies, why forget the miraculous exotic propulsion systems that our theories support but that aliens have made practical in our sci-fi movies and novels? WeirdSciences is known for its theoretical approach to space dimensions and for its coverage of extraterrestrial life. Here are some new exotic propulsion methods, some of which you have probably never heard of. Get ready for the tour!

Emergency Warp Power Cell

In desperate situations, a starship may have to jettison its warp core to avoid being destroyed by a massive antimatter or zero-point explosion. Although this means immediate survival for the ship and crew, it also means that, without a power generator for the warp drive, the starship will be stranded years to centuries away from anything that could be considered a safe harbour. A rare, yet possible, situation occurs when the warp core has been declared irretrievable, a rescue ship cannot reach the ship in distress (SID) in time, and the ship is trapped in the vast void between stars. Such a situation spells disaster for the ship and crew. A new piece of equipment, the Emergency Warp Power Cell (EWPC), can replace the lost warp core in this emergency and allow the ship to reach a safe haven.

In simplest terms, the EWPC is a large-scale matter/antimatter fuel cell, similar to those used in photon torpedo propulsion systems. The fuel cell is designed to be mounted on the existing warp power conduits where the missing warp core used to be: it travels up the warp core shaft and is anchored by the same tether beams that held the warp core in place. A typical fuel cell works by injecting antiprotons directly into the plasma power conduits. Energy is conducted through a power conduit by the high-speed motion of the plasma particles. As in billiards, energy is passed along when a moving plasma particle hits a second particle and transfers its kinetic energy to it, the second to the third, the third to the fourth, and so on. The antiprotons reacting with the plasma initiate this high-energy kinetic transfer. The EWPC, however, uses a specialized plasma tank in which the antimatter reaction takes place, so the warp power conduits are not put at unnecessary risk of damage. Plasma for the EWPC is produced by ionizing deuterium from the ship's storage tanks.

Although the fuel cell is somewhat simpler in design, an antimatter reactor that uses dilithium is far more fuel efficient, because dilithium acts as a focusing lens for the annihilation reaction. The fuel cell instead uses a series of high-intensity magnetic containment fields to propel the plasma in the desired direction. These containment fields consume a considerable amount of power and can cause the fuel cell to generate considerable heat. For this reason, 20% of the fuel cell consists of a cryogenic cooling system that keeps it within safe thermal limits.

The antiprotons for the fuel cell do not come from conventional antimatter but from a stable heavy isotope of element 115. Element 115 is used because, when it is bombarded with high-energy protons, it transmutes into element 116, which is unstable and decays, releasing antimatter particles that are easily collected. Element 115 is recycled using an atomic resequencer, a component found in industrial replicators. This antimatter fuel source is typically used by the Zeta Reticulans, the alien species humans used to call the Greys.

Because the fuel cell generates only limited power, the main energizers are disconnected from the warp power matrix so that all of the fuel cell's energy goes to the warp nacelles. Critical systems, such as life support, are switched to auxiliary power. Despite its low output, the fuel cell can generate enough power for a starship to accelerate slowly to Warp 4, hold that speed, and travel a distance of 10 light-years, depending on the starship. Although 10 light-years is not a great distance by early 25th century standards, it could allow a starship to reach a star system with a habitable or adaptable planet, where the crew can survive until a rescue ship arrives.[Ref]

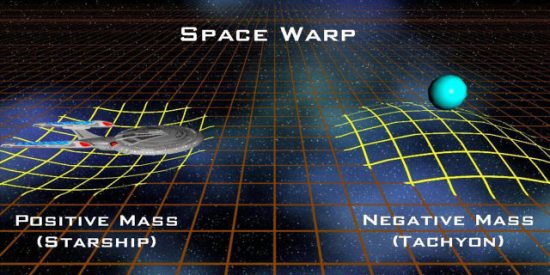

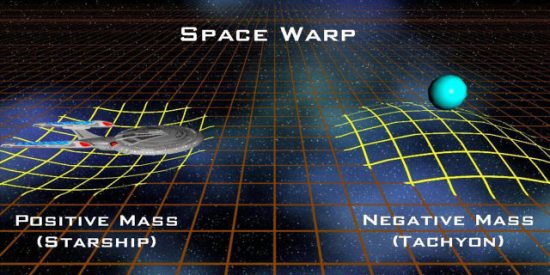

Negative Mass Warp Drive

By the use of a subspace displacement field, starships are capable of travelling faster than the speed of light by catastrophically collapsing the space in front of the ship and expanding the space behind it. But some particles, such as tachyons, are capable of travelling faster than light and exist within normal space because these particles are composed of negative mass.

Whereas normal mass, such as that of planets and starships, creates a depression or trough in the fabric of the space-time continuum that prohibits faster-than-light travel, negative mass creates a crest that allows it. Exploiting this effect, Starfleet developed a new mode of propulsion known as the Negative Mass Warp Drive, or simply the Negative Mass Drive. The warp field coils within the nacelles generate a subspace field that “reflects” gravitons, much as a mirror reflects light. The reflected gravitons cause a phase shift in the ship's natural gravitational field, giving the ship negative mass properties and thus allowing faster-than-light travel.

The negative mass drive has one advantage over conventional warp drive. Although it is initially slower, with the help of the ship's impulse engines it can keep accelerating the ship until the fuel supply is exhausted. Conventional warp, by contrast, has a maximum warp limit, because warp drive can only collapse and expand space at a certain rate. The negative mass drive lets the ship “exist” beyond the light barrier while remaining subject to basic physics, such as acceleration.

To conserve power, the engines reflect only 51% of the gravitons generated by the ship's mass. Reflecting exactly 50% would cancel out the effect of the remaining, un-reflected 50% and neutralize the ship's space warp; the additional 1% tips the balance and allows the ship to travel faster than light. It would make no sense to spend 100% power where it is not needed. Unlike quantum mechanics, whose nature is very turbulent, relativistic physics is very laminar, or smooth, which substantially reduces the risk of a ship being sheared apart when it jumps to warp with the negative mass drive. The engines are powered by standard matter/antimatter reactions. Since the engines themselves require only a limited amount of power, specifically the equivalent of warp 4, the remaining antimatter power is channelled to the impulse engines so the ship can accelerate at a much greater rate. The new engines are normally installed in ships whose warp power conduits and impulse engines are almost literally metres apart, and sometimes in ships whose warp and impulse drives share a common power source, such as an intermix chamber. This reduces refit time as well as the modification and redesign the ships require. Such ships include the Intrepid class, Defiant class, and Akira class, as well as the retired Enterprise class.
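
A rough bookkeeping of the 51% figure, assuming (as the paragraph implies) that each reflected graviton cancels exactly one un-reflected graviton. The symbols f (fraction of gravitons reflected) and g_0 (the ship's natural graviton field) are introduced here for illustration only:

$$
g_{\text{net}} = (1 - f)\,g_0 - f\,g_0 = (1 - 2f)\,g_0,
\qquad
f = 0.50 \;\Rightarrow\; g_{\text{net}} = 0,
\qquad
f = 0.51 \;\Rightarrow\; g_{\text{net}} = -0.02\,g_0
$$

Under this reading, f = 0.50 is the neutral state in which reflection exactly balances the un-reflected field, and the extra 1% of reflection shifts the balance by two percentage points (51% against 49%), which is enough to flip the sign of the ship's space warp.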

This new drive has sparked heated debate about what happens at the instant the ship jumps to warp. At that instant, the ship attains exactly 50% space inversion, which results in a completely neutral space warp. Most scientists and engineers agree that the ship then travels at light speed, warp factor 1. Some maverick scientists, however, believe that for an infinitesimally small amount of time the ship attains warp factor 10, infinite velocity. Because of the advantage of constant acceleration, some speculate that several months of acceleration with the negative mass drive could allow a ship to reach speeds equivalent to transwarp or slipstream velocities.

Singularity Propulsion

A quantum singularity is usually a naturally occurring phenomenon. Also called a black hole, a quantum singularity is usually the result of a collapsed massive star, such as a red supergiant. The quantum singularity emits a large number of gravitons, making escape from deep inside the event horizon impossible at or below c. Space around the singularity is folded so that the imaginary gravity lines (as shown in some diagrams) follow the equation f(r) = 1/r up to a point (a two-quadrant cutaway diagram of the black hole would show f(r) = -|1/r|), though I am not going to go into more specific detail.

There is no evidence of what is actually inside a black hole, only that most black holes have “donut singularities.” These singularities are ring-shaped, and it is possible that they connect to another black hole, forming a bridge between two places, two time frames, two universes, or a combination of the three. When the two join, one of them has to become a white hole (the exact opposite of a black hole). To use an analogy, instead of being “the universe’s vacuum cleaner,” it would be like a blow dryer: a white hole expels everything, and nothing can get in, only out. When a black hole connects to another black hole or to a white hole, they form a wormhole, although it runs only one way, toward the white hole. The “wall” of the bridge, however, is too narrow for anything bigger than 10 atomic masses (including molecules and atoms). Some kind of antigravity field (probably an antigraviton particle emitter) could keep the bridge from disconnecting and hold it open wide enough for a vessel to pass through. A wormhole, unlike a black hole-to-white hole bridge, serves as a two-way gateway to a different time, place, or universe. A wormhole can be destabilized with antiverteron and antitachyon pulses.

There are three ways to get to a destination (from point A to point B). The first is the “usual” way, in which line AB has length d. The second is the way of the “worm,” in which line AB has length d - x, where x is the stretch of normal space the wormhole cuts out (so d - x < d). The third is the “0” way, in which space is bent so that points A and B merge into a new point, C, and line AB has length zero. Singularity propulsion takes the third road.

Singularity propulsion is one of the first FTL (faster-than-light) propulsion methods a typical civilization would attempt, since this theory is usually the first one a civilization comes up with (think of Stephen Hawking on Earth). The attempt usually fails, because the civilization lacks the technology to detect very small particles that are still unthinkable to it. Such a civilization also tends to think that a matter-antimatter collision can tear the fabric of space, but that is not the way to do it.

To start singularity propulsion, a ship needs to be in outer space, preferably outside a solar system in case of an accident. One also needs graviton, tachyon, verteron, and chroniton generators, plus their counterparts to shut the rift down. First, a graviton generator is launched from the ship to a distance of about 1000 km. Once the generator starts up, the gravitons have to be monitored carefully, as the emissions tend to increase over time. When the generator emits a graviton field of 100S (1S = 1 solar mass = Sol), a short burst of antigravitons makes the emissions slow down significantly. A moment later, tachyons and verterons must be emitted simultaneously. Together, tachyons and verterons open a stable rift that connects the point of destination to the point of origin, so that the distance between them is 0. If only verterons are used, the rift evaporates, since the rift itself emits verterons. If only tachyons are used, the rift is stable, but only for a short while, and the generator burns out. Chronitons are needed only if the travelers want to avoid ending up at some random time in our universe: chronitons tie the time at the point of destination to that at the point of origin. All particles, however, have to be of a certain frequency, and each sector and time in the universe has a different signature. One can even attempt time travel with this technology.
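
The particle rules in this procedure can be summarized as a small lookup. The sketch below is a hypothetical model for illustration, not a real control system: the names `antigraviton_burst_due`, `rift_outcome`, and `RiftOutcome` are invented here, and the case where neither tachyons nor verterons are emitted is an assumption, since the text does not describe it.

```python
from dataclasses import dataclass

# Threshold taken from the procedure described above.
BURST_THRESHOLD_SOLAR_MASSES = 100.0  # fire the antigraviton burst at a 100 S field


@dataclass(frozen=True)
class RiftOutcome:
    stable: bool               # does the rift hold open at all?
    short_lived: bool          # stable, but only for a short while
    generator_burns_out: bool  # the emitter is destroyed in the process
    time_anchored: bool        # chronitons tie destination time to origin time


def antigraviton_burst_due(field_solar_masses: float) -> bool:
    """True once the monitored graviton field reaches the burst threshold."""
    return field_solar_masses >= BURST_THRESHOLD_SOLAR_MASSES


def rift_outcome(tachyons: bool, verterons: bool, chronitons: bool) -> RiftOutcome:
    """Map the particle mix emitted after the burst to the described result."""
    if tachyons and verterons:
        # Both together open a stable rift.
        return RiftOutcome(True, False, False, chronitons)
    if verterons:
        # Verterons alone: the rift emits verterons itself and evaporates.
        return RiftOutcome(False, False, False, chronitons)
    if tachyons:
        # Tachyons alone: stable only briefly, and the generator burns out.
        return RiftOutcome(True, True, True, chronitons)
    # Neither (assumption, not covered by the text): no rift forms.
    return RiftOutcome(False, False, False, chronitons)


print(rift_outcome(tachyons=True, verterons=False, chronitons=True).generator_burns_out)  # True
```

The tachyon-and-chroniton mix used for Admiral Janeway's rift, described below, falls into the "tachyons alone" branch, which is why the model reports a burned-out generator for it.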

Some species do have this technology, such as the Q and the Bajoran wormhole prophets. As one gets closer to a singularity, one can obtain god-like powers, because one can exist everywhere, in every universe, time, and place: the four dimensions are meaningless on (or in) the singularity. One can therefore assume that these “godly” beings actually live in the wormhole. They might have lived there their whole lives (they were implanted there), or a ship might have been caught and somehow left survivors for whom linear time has no meaning. Some of these species, such as Q, have enough experience with linear time to understand it. Admiral Janeway (“Endgame”) succeeded in opening a “0” rift linking the year 2404 in the Alpha Quadrant to the year 2378 in the Delta Quadrant. The rift was created using only tachyons and chronitons, so her device burned out.

Tachyon Drive

Tachyons have been talked about, used, and abused in many ways, from the conventional, such as communications, to the absurd, such as creating an anti-time anomaly. In 2504, this common faster-than-light particle is used for propulsion for the first time. The idea was first seriously theorized in 2371, when Commander Benjamin Sisko and his son Jake recreated a Bajoran solar sailing ship that, according to legend, was able to reach Cardassian space. Thanks to its unique design, the ship was caught in tachyon eddies in the Denorios Belt that pushed it to warp speeds.

In theory, tachyons are able to travel faster than light and still exist in normal space because this particle has negative mass. Conventional mass, such as the matter that makes up starships, cannot travel at light speed, let alone faster. Light itself has no mass and can therefore travel at light speed. Negative mass, which lies on the other side of the light scale, can travel faster than light.

Some have speculated that collecting and storing enough tachyons would neutralize a ship's natural mass and allow it to travel faster than the speed of light. However, to neutralize enough mass for warp 1 (light-speed) travel, a 4.5 million metric tonne Galaxy class starship would need to store -4.5 million metric tonnes of tachyon particles, and even more to travel beyond warp 1. This is even more difficult because tachyons cannot simply be stored the way deuterium can.
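
The mass balance behind these figures can be written out, treating the stored tachyons as a simple negative addend to the ship's mass (the symbols are introduced here for illustration):

$$
m_{\text{net}} = m_{\text{ship}} + m_{\text{tach}}, \qquad
m_{\text{net}} = 0 \;\Rightarrow\; m_{\text{tach}} = -m_{\text{ship}} = -4.5 \times 10^{6}\ \text{t}
$$

$$
\text{beyond warp 1:}\quad m_{\text{net}} < 0 \;\Leftrightarrow\; |m_{\text{tach}}| > 4.5 \times 10^{6}\ \text{t}
$$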

The dream of a tachyon drive, however, has not died. The old M2P2 drive of the 21st century, like those used on the old DY starships, used a solar-powered electromagnetic field to gather ionized gases from the sun and let the solar wind push the newly formed plasma field, along with the ship, like an electromagnetic sail. A spatial distortion field would be used in a similar fashion to collect enough of the free tachyon particles, concentrate them, and use their negative mass field to cancel out the ship's mass and allow it to travel faster than the speed of light. The tachyon drive would be simpler than conventional warp drive, because it does not have to maintain a proper balance between the two warp nacelles and does not need a deliberate imbalance in the warp field to steer the ship at warp; steering can be done by the impulse engines. In terms of size, the tachyon drive is more conceivable for small or medium-sized ships, from shuttle pods up to the Phoenix class starships, but it is possible for even the heaviest Federation ships, such as the Galaxy class and Pelagic class, to use this drive.

Travel through Hyperspace

Hyperspace, what is that anyway? Generally, hyperspace is a space of higher dimensions, meaning dimensions beyond the three known dimensions of space and the one of time. These “higher” dimensions lie outside the limits of our perception and, for the most part, of our understanding too. Nevertheless, the principle can be explained with a simple example. Let us assume that we are small, two-dimensional worms crawling on an infinitely large sheet of paper. We live in peace, and everything is as usual. But one day, a smart physicist comes up with the idea that there might be a third dimension besides the two well-known dimensions of our sheet of paper. If he were able to climb into a rocket and leave for the third dimension, he would vanish from the eyes of his fellow worms as soon as he left the paper surface. He could then reappear, with no apparent explanation, at any other place on the surface. Our hyperspace works in much the same way. But more about that later.

For a long time, hyperspace travel was of little interest to Starfleet's spacecraft engineers. That changed in 2381, just one year ago, when Federation archaeologists discovered the remains of a long-extinct civilization in the Ventana system at the edge of the Beta Quadrant. After deciphering its millennia-old databanks, scientists were faced with an incredible amount of data about hyperspace and ways of taking advantage of it. Apparently, they had discovered a civilization that had unveiled one of the last secrets of our universe: the physics of higher dimensions.

Theory

The following paragraphs illustrate how a journey through hyperspace could be carried out on Federation starships. To get into hyperspace in the first place, a “window” to the higher dimensions has to be opened, virtually lifting the ship into hyperspace. Creating such a gate would be very energy-consuming and complex; in fact, it requires more energy than any available or known source can provide. To circumvent this problem, engineers can apply a little trick: they “borrow” energy from the vacuum. We owe this to a variant of Heisenberg’s Uncertainty Principle. The Uncertainty Principle applies not only to the position and velocity of a particle, but also to its energy and the time over which it has this energy. The formula is h ≈ E × t (the Planck constant is approximately the energy times the time). Thus, an amount of energy E must be “paid back” (transferred back into the vacuum) after a time t. The more energy is borrowed, the sooner it must be given back in order not to violate energy conservation. This alone would not suffice to enable hyperspace travel, however, because the window would collapse as soon as the energy was given back, so the net result would be zero.

The second lucky circumstance is that creating a gate requires vastly more energy than maintaining it, for which a normal matter/antimatter reaction may be sufficient. The sequence of events may therefore be as follows. We borrow an amount of energy, Ev. The energy from the reactor, Er, plus Ev gives the total energy Et needed to build the gate: Ev + Er = Et. We then return the energy Ev within a time t = h/Ev, and what remains is the energy Er from the reactor, just enough to maintain the window. This way, we achieve a maximum effect with a minimum amount of “true” energy.
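
The gate-opening budget above reduces to two lines of arithmetic. This minimal sketch applies the article's relations Ev + Er = Et and t = h/Ev; the function name `gate_budget` and the input values are hypothetical. It follows the article's order-of-magnitude form h ≈ E × t; textbook treatments usually state the energy-time relation as ΔE·Δt ≳ ħ/2, which changes the constant but not the trend that larger loans must be repaid sooner.

```python
PLANCK_H = 6.62607015e-34  # J*s, exact in the 2019 SI


def gate_budget(total_energy_j: float, reactor_energy_j: float) -> tuple[float, float]:
    """Return (borrowed energy Ev, payback time t) for a gate needing Et joules.

    Uses the article's relations: Ev = Et - Er and t = h / Ev.
    """
    borrowed = total_energy_j - reactor_energy_j  # Ev = Et - Er
    if borrowed <= 0:
        # The reactor alone covers the gate; nothing needs to be borrowed.
        return 0.0, float("inf")
    payback_time_s = PLANCK_H / borrowed  # larger loans must be repaid sooner
    return borrowed, payback_time_s


print(gate_budget(2.0, 1.0))  # (1.0, 6.62607015e-34)
```

Borrowing more energy shortens the payback window: `gate_budget(3.0, 1.0)` returns a payback time half as long as `gate_budget(2.0, 1.0)`.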

Implementation

So much for the theory; now for the practice. Using a conventional photon torpedo launcher, the starship or an outpost launches a beacon equipped with an M/AM reactor and a so-called collector capable of borrowing energy from the vacuum. This beacon builds up the window in the way explained above and keeps it open for about one minute. The starship cannot enter hyperspace yet, however, because the higher-dimensional space is almost completely evacuated as soon as the gate opens. No one can yet explain why. In any case, this would force every starship already in hyperspace to leave it at that point, regardless of its planned destination. To avoid this effect, a specific neutrino imprint is applied to the window during its creation, and each ship has its own individual imprint. Why neutrinos, of all particles? Because neutrinos interact with the gate in a special way and can leave something like a fingerprint. Only starships with a matching imprint can then pass the gate. Without this technology, only ships traveling from one system into that same system could be in hyperspace at the same time. The imprint also plays an important role when leaving hyperspace. More about that later.
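
The gating rule for the neutrino imprint can be sketched as a filter over the ships currently in hyperspace. This is an illustrative model of the text's description only; `Ship`, `neutrino_imprint`, and `ships_forced_out` are hypothetical names, and imprints are represented as plain strings purely for convenience.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Ship:
    name: str
    neutrino_imprint: str  # hypothetical stand-in for the ship's individual imprint


def ships_forced_out(gate_imprint: Optional[str], ships_in_hyperspace: list[Ship]) -> list[Ship]:
    """Return the ships a newly opened window pulls out of hyperspace.

    An unimprinted window evacuates everyone; an imprinted one affects only its match.
    """
    if gate_imprint is None:
        return list(ships_in_hyperspace)
    return [s for s in ships_in_hyperspace if s.neutrino_imprint == gate_imprint]


fleet = [Ship("A", "nu-1"), Ship("B", "nu-2")]
print([s.name for s in ships_forced_out("nu-2", fleet)])  # ['B']
```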

Once the ship is in hyperspace, the second step is taken. Gravitational wave emitters and receivers have been positioned in every important system (e.g. Sol), along with an array of the beacons described above. These emitters continuously generate gravitational waves of a certain frequency, since these are the only waves able to propagate in hyperspace (light, for instance, cannot). The starship in hyperspace receives the frequencies of the systems and replies with the specific frequency of the destination system. In addition, it transmits a data package, encoded in gravitational waves, that is received in all systems. When the receiver of the destination system picks it up, a window to hyperspace is created as described above, with exactly the neutrino imprint of the starship. The ship is hurled out of hyperspace and finds itself at its destination. One more thing should be mentioned: a starship in hyperspace can open a gate itself, but its exit point would be completely random. It could emerge anywhere in the universe.
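
The exit handshake described here can be sketched as follows. The frequencies, the choice of systems, and the `ExitRequest`, `choose_reply`, and `systems_opening_window` names are all hypothetical; the sketch only encodes the rule that every system receives the broadcast but only the one whose frequency matches opens a window carrying the ship's imprint.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExitRequest:
    destination_frequency: float  # gravitational-wave frequency of the target system
    neutrino_imprint: str         # imprint the destination must apply to its window


def choose_reply(heard: dict[str, float], destination: str, imprint: str) -> ExitRequest:
    """Ship side: reply with the destination's own frequency plus the ship's imprint."""
    return ExitRequest(heard[destination], imprint)


def systems_opening_window(systems: dict[str, float], request: ExitRequest) -> list[str]:
    """Every system receives the broadcast; only the matching one opens a window."""
    return [name for name, freq in systems.items()
            if freq == request.destination_frequency]


# Hypothetical beacon frequencies (arbitrary units).
beacons = {"Sol": 101.0, "Ventana": 303.0}
request = choose_reply(beacons, "Ventana", imprint="nu-7")
print(systems_opening_window(beacons, request))  # ['Ventana']
```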

Nanotechnology only exacerbates the situation. We expect full nanotech, uploading, AIs, and so on to arrive before interstellar travel becomes practical. Assume we keep the same dimensions for our bodies and brains as we have now. Once we are uploaded onto a decent nanotech platform, our mental speeds can be expected to exceed our present rates by the same factor by which electrical impulses outpace our neurochemical impulses: about a million. Subjective time would speed up by this factor. If a couple of subjective years is the limit beyond which people would be reluctant to travel routinely, then a typical trade zone or culture cannot extend beyond a couple of light-minutes. Even a single stellar system would be unable to form a single culture or trade zone. The closest planet would then seem farther away than the nearest star does today.
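
The arithmetic behind the trade-zone estimate can be checked directly. The sketch assumes travel at exactly light speed (the best case), a Julian year of 365.25 days, and the text's speed-up factor of about a million; `zone_radius_light_minutes` is a hypothetical helper name.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year, in seconds


def zone_radius_light_minutes(subjective_years: float, speedup: float = 1e6) -> float:
    """One-way distance light covers within a subjective-time travel budget."""
    objective_seconds = subjective_years * SECONDS_PER_YEAR / speedup
    return objective_seconds / 60.0  # light covers one light-minute per minute


print(round(zone_radius_light_minutes(2), 3))  # 1.052
```

With two subjective years and a factor of 10^6, the result is about 1.05 light-minutes, so even under the light-speed assumption the zone stays within the “couple of light-minutes” bound stated above.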

The probe remains in contact with the home base throughout the trip. As a drop point approaches, another wormhole plus a deceleration rig would be loaded through and detached from the mother craft. Deceleration would likely be quicker and less expensive than acceleration, because the daughter craft could brake against interstellar and galactic gas, dust, and magnetic fields. For energy-cost reasons, the transfer of colonists is unlikely to begin until deceleration is complete.